Lucas Dionisopoulos

Here's my quick gist:

- Initially studied finance (WashU) and worked in investment banking for 2 years

- Knew I wanted a career change... so I took a gap year where I mostly studied (but also had a bit of fun)

- Worked on research in grad school (UCSD) where I focused on RL for LLMs with PEARLS Lab. Gave a talk at NeurIPS (FoRLM Workshop) and published a paper at ICML

- Currently: Jack of all trades at Hadrian (Special Projects)

If you're in LA or just want to chat, you should reach out!

Blogs

Some Writing

- Why Pivot? My motivation for leaving finance, taking a gap year to study ML, and starting grad school.

- My Hours as an Investment Banking Analyst. I tracked hours during my 2 years in banking and made some neat charts.

Research

Some Research

Primarily worked on RL + LLMs in PEARLS Lab. Previously worked with UCSD ProgSys. My thesis ties these two together.

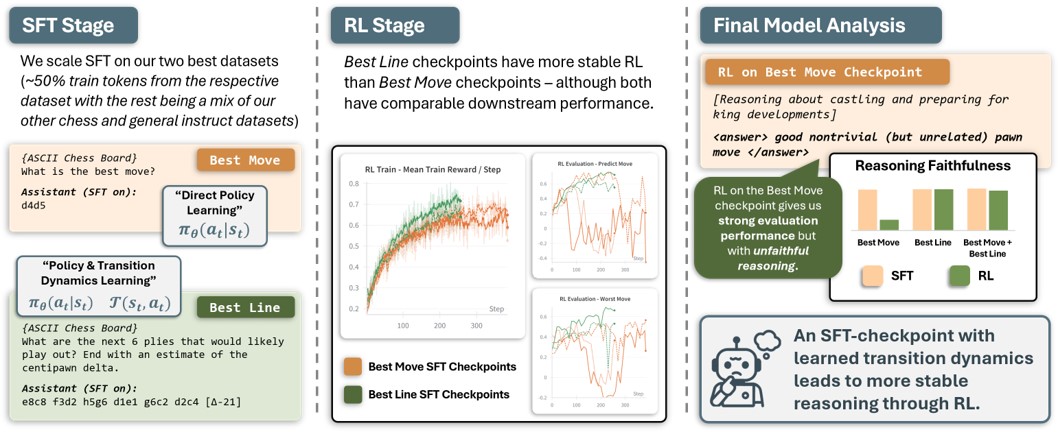

How Reasoning Evolves from Post-Training Data: An Empirical Study Using Chess

NeurIPS 2025 FoRLM Workshop (Oral)

ICML 2026

Lead author. Outperformed state-of-the-art open-source reasoning models in chess through SFT and RL on a 7B-parameter language model. The key focus of this work was studying how fine-tuning influences post-RL reasoning, using custom, theoretically inspired datasets.

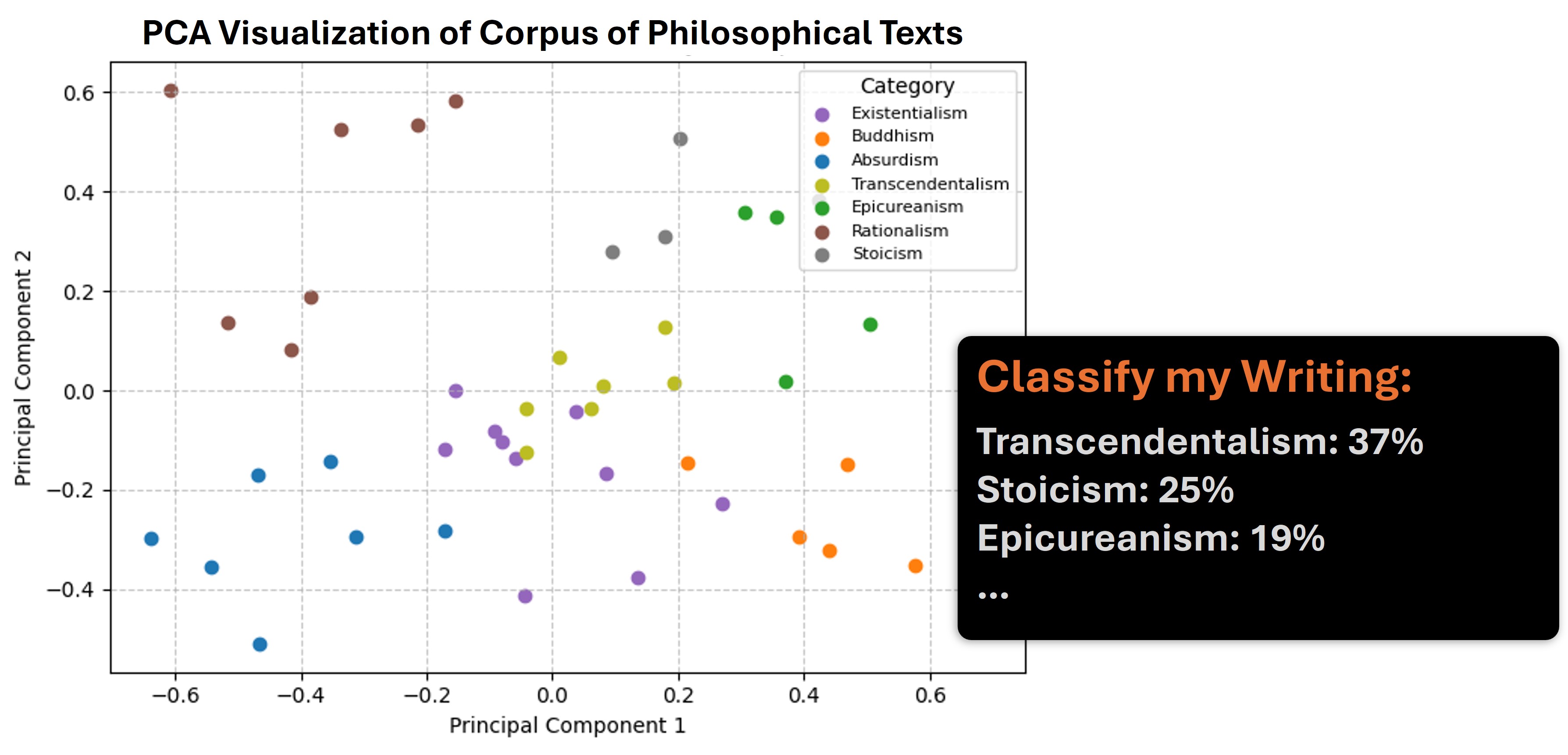

Philosophize That

Neat project I whipped up to train a philosophy classifier using paragraph-level text embeddings (I then classified my own writing).

Projects

Some Technical Projects

RL Benchmarking Gym

Built a clean, hackable RL gym engine from scratch to implement and ablate algorithms from baseline to SoTA.

Dungeon Coder

Full-stack webapp combining image generation and image-to-3D asset models to create custom assets you can place in interactive dungeons or 3D print. Includes a dungeon generator that turns a prompt into a 3D dungeon using classical algorithms plus LLM agents. Built with React, Three.js, and self-hosted CV models.

SLAM-Based Autonomy for 4-Wheel Rover

Developed an autonomy stack for an uncalibrated 4-wheel differential-drive rover, implementing vision, EKF-SLAM from scratch, and path planning algorithms. Built with ROS2, OpenCV, and Python.

Reinforcement Learning Driver

Created and optimized a racing game in Pygame and taught an RL agent to drive in it using Double Q-learning.

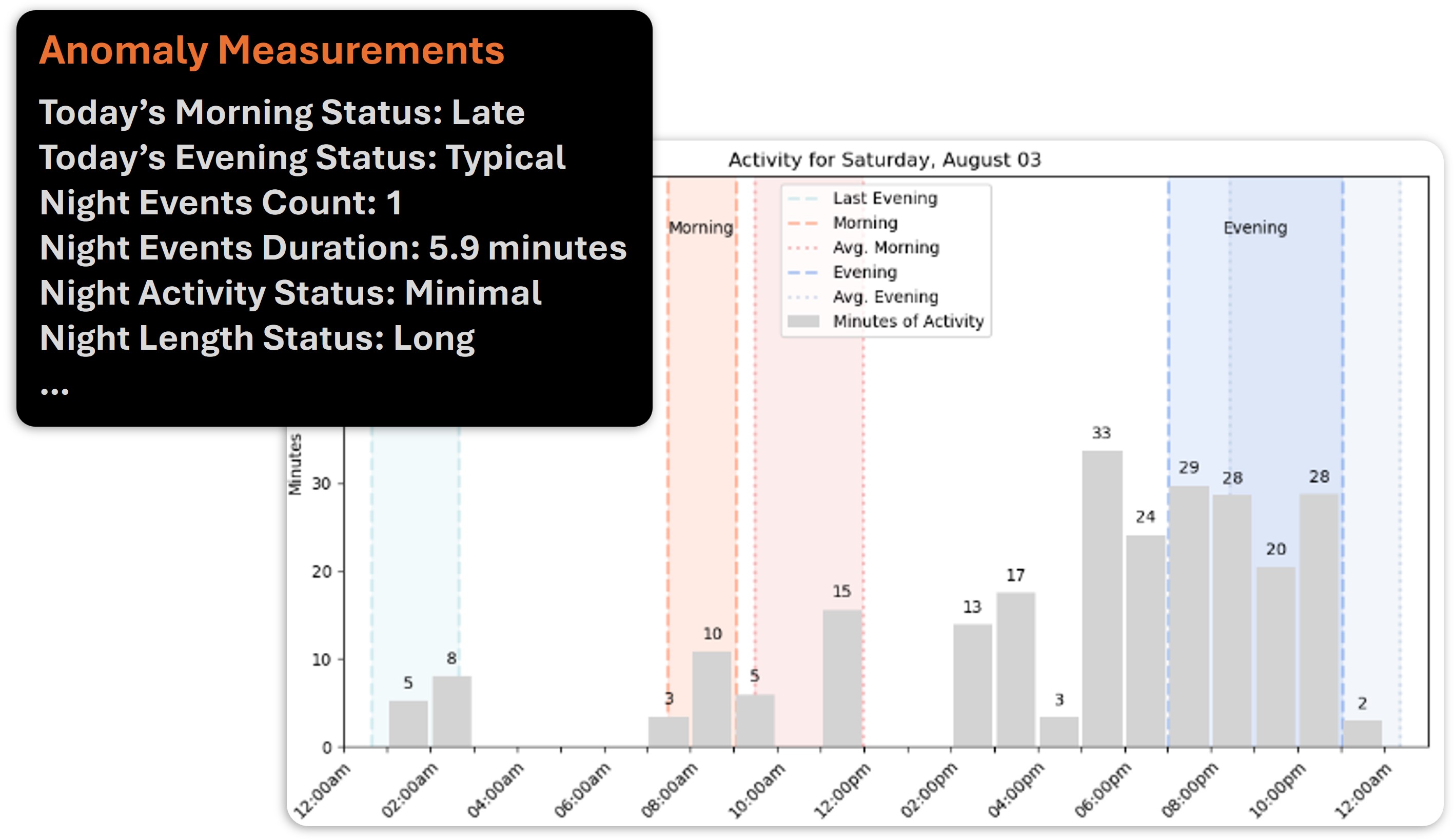

Machine Learning Anomaly Detection

Developed a real-time, interpretable anomaly detection system over noisy time-series data using kernel ridge regression for a San Diego healthtech company.

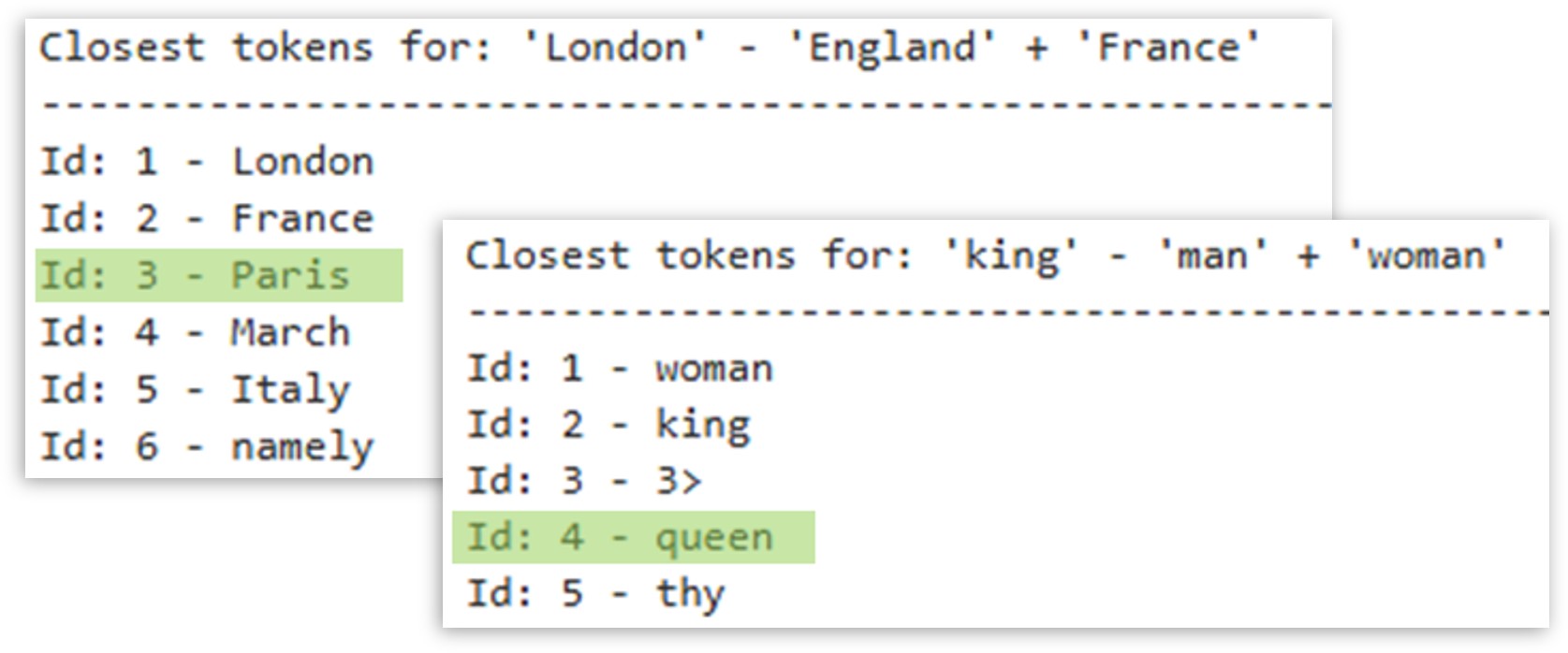

Recreating Word2Vec

Recreated Word2Vec vector arithmetic entirely from scratch: building a tokenizer, cleaning English Wikipedia, and training an embedding model.

Personal

Sabbatical

When I finished banking, I wanted to lock in and study ML.

I considered my options: study at home in Wisconsin or study abroad at long-term Airbnbs and pet sits.

One of these paths seemed more fun.

Note: I also did a lot of work.

Feel free to reach out!

firstlast[at]gmail[dot]com